Technical challenges and solutions to enable health data standardisation

Hannah Gaimster, PhD

31 August 2023

Introduction to health data standardisation

Currently, there is huge potential for scientific discovery, given the size and rate of growth of health research data. However, researchers and clinicians who want to use this valuable data encounter many difficulties, including time and effort spent preparing data for analysis.

Health data comes from a wide variety of sources. This means it may not use the same organised terminology or be stored in the same format. These differences in how data is stored or described create challenges for researchers preparing data for analyses. There must be sustainable, scalable, and secure methods for storing, accessing, combining, and using this vast data.

Data must be translated into interoperable formats to address these issues with health data analysis. Transformation of health data is the answer.

To address the inconsistent nature of health data, common data models (CDMs) are being used more frequently in the healthcare industry. Several CDMs have grown in popularity in the life sciences sector, including Fast Healthcare Interoperability Resources (FHIR) from HL7, the Observational Medical Outcomes Partnership (OMOP) CDM from OHDSI, and the Study Data Tabulation Model (SDTM) from CDISC.

When data is standardised to these CDMs, it may be efficiently combined. This makes it more useful than the sum of its parts alone. CDMs allow for sharing of research tools and data across nations, sources, and systems.

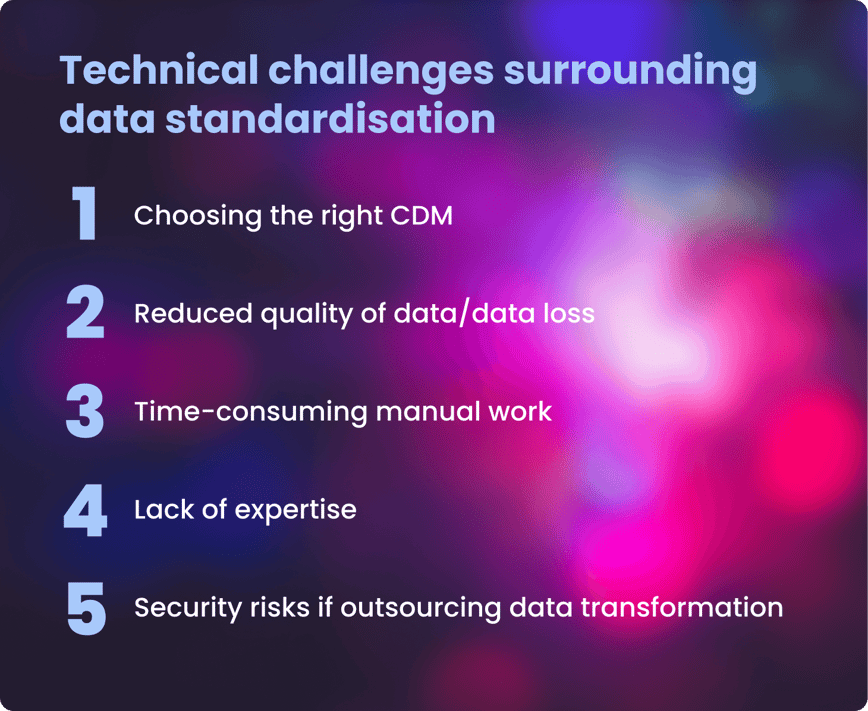

Many benefits can be gained by converting health data to a standard format, but the process is not without technical challenges. This article explores the challenges researchers face when standardising data and presents some solutions being developed.

Technical challenges and solutions to these issues in health data transformation

- Choosing the right common data model (CDM)

CHALLENGE

Organisations and individual researchers may favour different medical vocabularies or ontologies. Choosing the most appropriate CDM will depend on a range of factors, including:

- the type of data,

- how the data is being collected (e.g. via a clinical trial or observationally),

- the analyses it will be used for,

- the ease and speed of use required.

SOLUTION

OMOP is gaining particular popularity in large population-scale health studies worldwide, and it should be considered a strong front-runner when an organisation or researcher chooses which CDM to work with. It has emerged as the leading open community CDM, used by biobanks, research institutions and pharmaceutical companies to standardise clinical data.

The OMOP CDM, created by the OHDSI community, unifies various data sources by converting them into a uniform structure and utilising a standardised vocabulary.

Examples of health organisations utilising OMOP as their CDM:

- The National Health Service (NHS) in the UK

- UK Biobank

- Genomics England

- All of Us from the National Institutes of Health (NIH) in the US.
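To make the idea of a uniform structure plus a standardised vocabulary concrete, here is a minimal Python sketch. The column names are a small subset of OMOP's condition_occurrence table; the lookup table, the source codes and the concept IDs 1001 and 1002 are placeholders invented for illustration, and the two diabetes-like codes are simply assumed to denote the same condition. A real mapping would be taken from the OHDSI standardised vocabularies.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical lookup from (source vocabulary, source code) to a standard
# concept ID. The IDs are placeholders, not real OMOP concept IDs.
SOURCE_TO_STANDARD = {
    ("ICD10", "E11.9"): 1001,
    ("READ", "C10F."): 1001,
    ("ICD10", "I10"): 1002,
}

# OMOP reserves concept ID 0 for "no matching concept".
UNMAPPED_CONCEPT_ID = 0


@dataclass
class ConditionOccurrence:
    """A small subset of the columns in OMOP's condition_occurrence table."""
    person_id: int
    condition_concept_id: int
    condition_start_date: date
    condition_source_value: str


def to_condition_occurrence(person_id, vocabulary, code, start_date):
    """Convert one source diagnosis into the shared structure."""
    concept_id = SOURCE_TO_STANDARD.get((vocabulary, code), UNMAPPED_CONCEPT_ID)
    return ConditionOccurrence(
        person_id=person_id,
        condition_concept_id=concept_id,
        condition_start_date=date.fromisoformat(start_date),
        condition_source_value=code,  # keep the original code for traceability
    )


# Two sources using different vocabularies end up with the same concept ID.
hospital_row = to_condition_occurrence(1, "ICD10", "E11.9", "2021-03-04")
gp_row = to_condition_occurrence(2, "READ", "C10F.", "2020-11-15")
unknown_row = to_condition_occurrence(3, "LOCAL", "XYZ", "2022-01-01")
```

Keeping the original code in condition_source_value means nothing is lost when a code cannot be mapped: the row is still loaded, and the zero concept ID flags it for later review.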

- Lack of expertise in standardising and securely accessing and analysing data.

CHALLENGE

Most health data users (64%) lack the knowledge to standardise data quickly, even though the benefits of standardised health data are clear. Once data is standardised, users can bring standardised analytical tools to where the data resides in its secure environment. However, access to and analysis of the data must also be harmonised to maximise the insights that can be gained.

SOLUTION

This is where end-to-end solutions to standardise, access and analyse health data securely are key. Extract, transform, load (ETL) pipelines that automate the standardisation process and convert raw data to analysis-ready data can help simplify this process for researchers.
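As a rough illustration of what an ETL pipeline does, the sketch below uses only the Python standard library. The input columns, the `SEX_MAP` lookup and the target `person` table are assumptions made for this example, not a description of any particular product's pipeline.

```python
import csv
import io
import sqlite3

# Hypothetical raw export with inconsistent spacing and coding.
RAW_CSV = """patient,sex,dob
 17 ,F,1980-02-11
18,male,1975-07-30
19,,1990-12-01
"""

# Assumed harmonisation rule for the sex column.
SEX_MAP = {"f": "FEMALE", "female": "FEMALE", "m": "MALE", "male": "MALE"}


def extract(text):
    """Extract: read the raw rows as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: clean identifiers and harmonise coded values."""
    records = []
    for row in rows:
        person_id = int(row["patient"].strip())
        sex = SEX_MAP.get(row["sex"].strip().lower(), "UNKNOWN")
        records.append((person_id, sex, row["dob"].strip()))
    return records


def load(records, conn):
    """Load: write the analysis-ready rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS person "
        "(person_id INTEGER PRIMARY KEY, sex TEXT, birth_date TEXT)"
    )
    conn.executemany("INSERT INTO person VALUES (?, ?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
loaded = conn.execute(
    "SELECT person_id, sex, birth_date FROM person ORDER BY person_id"
).fetchall()
```

Splitting the work into three small functions keeps each stage testable on its own, which matters once new source formats are added.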

The software industry is shifting towards "no/low-code" tools. These tools are designed to be usable by more people, regardless of their background in data science. This is helping to fully democratise health data and the insights derived from it.

For example, the Galaxy Community, part of ELIXIR, provides a web platform for computational research in various 'omics' fields. Its tools enable users of diverse backgrounds to view the data directly or build reproducible pipelines and complex workflows for analyses.

While such low-/no-code tools are a huge first step, there should ideally be an end-to-end, federated solution for researchers and clinicians, providing the latter with the resources they require to understand their patients’ data.

- Reduced data quality and loss

CHALLENGE

When health data undergoes harmonisation, the data quality must not be compromised. Data quality is paramount to ensure solid conclusions can be drawn from it. Poor quality data can have a negative impact on patient treatment in the primary healthcare setting, the validity and repeatability of research findings, and the potential value of such data.

SOLUTION

Data quality must be assessed following data transformation into a standard format. Standardisation solutions should incorporate data quality checks alongside data transformation.

With Lifebit’s Data Transformation Suite, data is harmonised, mapped to existing standards, annotations and ontologies and then interlinked during data ingestion to produce a linked data graph. Quality assurance and control steps are also incorporated, ensuring data quality is not compromised throughout the standardisation process.
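The kind of check that can run alongside a transformation might look like the following Python sketch. It is not Lifebit's implementation: the function name, the field names and the three metrics are assumptions chosen to show the principle of comparing the output against the input, and a concept ID of 0 is treated as "unmapped", as in OMOP.

```python
def quality_report(source_rows, transformed_rows, required_fields, concept_field):
    """Summarise simple checks to run after a transformation step."""
    # Rows where any required field is absent or empty.
    missing = sum(
        1
        for row in transformed_rows
        if any(row.get(field) in (None, "") for field in required_fields)
    )
    # Rows whose code could not be mapped to a standard concept.
    unmapped = sum(1 for row in transformed_rows if row.get(concept_field) == 0)
    total = len(transformed_rows)
    return {
        "rows_lost": len(source_rows) - total,
        "rows_missing_required": missing,
        "unmapped_fraction": unmapped / total if total else 0.0,
    }


# Illustrative data: four source rows, of which three survived transformation.
source = [{"id": 1}, {"id": 2}, {"id": 3}, {"id": 4}]
transformed = [
    {"person_id": 1, "concept_id": 1001, "start_date": "2021-03-04"},
    {"person_id": 2, "concept_id": 0, "start_date": "2020-11-15"},
    {"person_id": 3, "concept_id": 1002, "start_date": ""},
]
report = quality_report(
    source, transformed, ["person_id", "start_date"], "concept_id"
)
```

A pipeline could refuse to publish a dataset when any of these numbers exceeds an agreed threshold, so that data loss is caught at ingestion rather than during analysis.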

- Time-consuming manual work

CHALLENGE

Ensuring health data is standardised takes time. According to some estimates, data scientists devote 80% of their work to organising and cleaning data.

SOLUTION

Technological advances, including ETL pipelines and end-to-end solutions, are an ideal solution. These solutions empower researchers and clinicians to spend time on what matters most: gaining novel insights to boost patient outcomes.

Lifebit’s Data Transformation Suite uses a set of pipelines that transform raw data into analysis-ready data. These pipelines are automated yet flexible and built to accommodate new data types over time.

- Security risks if outsourcing data transformation

CHALLENGE

Third-party providers can help with data transformation and standardisation. However, this often risks data security as the data will require downloading, making it vulnerable to interception.

SOLUTION

By employing federated technologies where experts standardise data but it never leaves its jurisdictional boundaries, researchers and clinicians avoid the issues of reduced security and increased costs to standardise data.
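The general principle behind federated approaches, keeping records in place while only derived results move, can be sketched in a few lines of Python. The function names and the example values are hypothetical, and a real federated platform would add authentication, auditing and disclosure controls that are omitted here.

```python
def local_summary(values):
    """Runs inside each site's environment; returns aggregates only."""
    return {"n": len(values), "total": sum(values)}


def pooled_mean(summaries):
    """Runs centrally; sees only the per-site aggregates, never raw records."""
    n = sum(summary["n"] for summary in summaries)
    total = sum(summary["total"] for summary in summaries)
    return total / n if n else None


# Illustrative measurements held separately at two sites.
site_a = [5.0, 6.0, 7.0]
site_b = [4.0, 8.0]

summaries = [local_summary(site_a), local_summary(site_b)]
mean = pooled_mean(summaries)
```

Because only counts and sums cross the boundary, the pooled mean matches what a centralised analysis would produce without any individual record being downloaded.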

Summary

Data harmonisation is important due to ever-increasing data volumes and formats in healthcare. The key to producing longitudinal patient insights is developing data standardisation solutions that ensure data quality and integrity while enabling secure, straightforward and compliant data analysis.

Author: Hannah Gaimster, PhD

Contributors: Hadley E. Sheppard, PhD and Amanda White

About Lifebit

Lifebit provides health data standardisation services for clients, including Genomics England, Boehringer Ingelheim, Flatiron Health and more, to help researchers transform data into discoveries.

Lifebit’s services are making health data usable quickly.

Interested in learning more about Lifebit’s health data standardisation services and how we accelerate research insights for academia, healthcare and pharmaceutical companies worldwide?

Find out more about the value of data standardisation at our upcoming webinar, Data Harmony, on 14 September 2023. Secure your place today.
